Optimizing IT Infrastructure: What Actually Moves the Needle

Most guides about IT infrastructure optimization read like a textbook. Evaluate your systems, make a strategic plan, upgrade hardware, implement DevOps. Technically correct, practically useless. Teams don't need a checklist of buzzwords. They need to know which changes deliver real results and which are just overhead disguised as progress.

This post focuses on the optimizations that actually make a measurable difference for teams running production workloads, and how CubePath's infrastructure is designed to support them.

Start with What's Slow

Before optimizing anything, identify what's actually slow. Not what feels slow, not what a blog post says should be slow. Measure it.

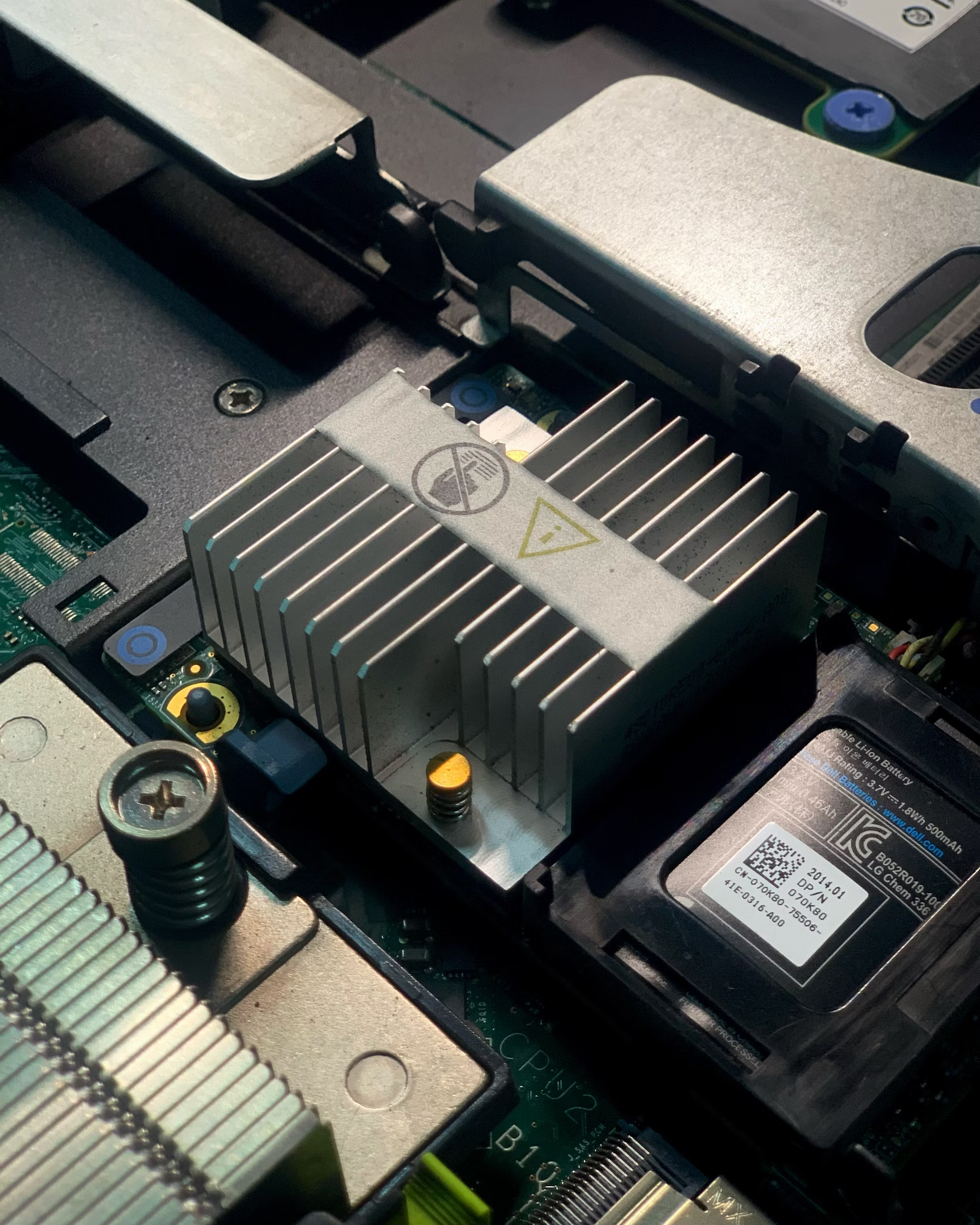

Storage latency. If database queries are slow, the first thing to check is the storage layer. Spinning disks add milliseconds to every I/O operation, and SATA SSDs, while far faster, are still limited by the interface. Moving to NVMe storage, which is standard on all CubePath VPS and bare metal servers, typically reduces database query latency by 50-80% when queries are I/O-bound. For those workloads, this single change often has more impact than any application-level optimization.
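To put a number on storage latency before changing anything, a small probe like the one below can help. It is a minimal sketch, not a replacement for a proper benchmarking tool: it times small synchronous writes (write, flush, fsync) and reports the median, 95th percentile, and maximum. The function name and the default sample count are illustrative choices. Point it at a directory on the same volume the database uses, since a temp directory backed by memory will report unrealistically low numbers.

```python
import os
import statistics
import tempfile
import time


def measure_fsync_latency(directory: str, samples: int = 50, block_size: int = 4096) -> dict:
    """Time small synchronous writes in `directory`; return latency stats in milliseconds."""
    payload = os.urandom(block_size)
    latencies_ms = []
    # The temp file lives in `directory`, so the numbers reflect that volume.
    with tempfile.NamedTemporaryFile(dir=directory) as handle:
        for _ in range(samples):
            start = time.perf_counter()
            handle.write(payload)
            handle.flush()             # push Python's buffer to the OS
            os.fsync(handle.fileno())  # ask the OS to push it to the device
            latencies_ms.append((time.perf_counter() - start) * 1000)
    latencies_ms.sort()
    return {
        "samples": samples,
        "median_ms": statistics.median(latencies_ms),
        "p95_ms": latencies_ms[int(0.95 * (samples - 1))],
        "max_ms": latencies_ms[-1],
    }


if __name__ == "__main__":
    # Replace the temp directory with the database's data directory for a real reading.
    print(measure_fsync_latency(tempfile.gettempdir()))
```

Running the same probe before and after a storage change gives a like-for-like comparison that does not depend on how the application feels.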

Network overhead. If services communicate across the public internet when they could be on a private network, every API call between them picks up unnecessary latency and crosses networks the team doesn't control. CubePath's private networking with MTU 9000 (jumbo frames) keeps inter-service communication fast and off the public internet.

CPU bottlenecks. If the application is CPU-bound, running on shared infrastructure where CPU time is contested means performance varies unpredictably. Moving the workload to a Dedicated CPU instance or bare metal eliminates the variability.

Wrong instance type for the workload. A memory-heavy database running on a CPU-optimized instance wastes money and underperforms. Matching the workload to the right instance type (Shared CPU for lightweight tasks, High Frequency for latency-sensitive work, Dedicated CPU for sustained compute) is one of the simplest and most effective optimizations.

Automate What Humans Shouldn't Be Doing

Manual server management doesn't scale. If deploying a new server involves SSHing in, running commands, configuring services, and hoping the documentation is up to date, the process is fragile and slow.

Infrastructure as Code. Define servers, networks, firewalls, and DNS in Terraform. When infrastructure is code, it's version controlled, peer reviewed, and reproducible. CubePath's Terraform provider supports VPS, projects, networks, and SSH keys, so the entire stack can be defined in files and applied with a command.

CLI and API automation. CubeCLI and the CubePath API make it possible to script everything the panel does: provisioning a server, configuring a Load Balancer, managing DNS records. If it can be done manually, it can be automated.

Scheduled operations. Backups, log rotation, certificate renewal. These should never depend on a human remembering to do them. Automate the routine work so the team focuses on the work that requires judgment.
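Certificate renewal is a good example of a check that belongs in a scheduled job rather than in someone's memory. The sketch below uses only Python's standard library; the function names and the 30-day threshold are illustrative assumptions. `fetch_not_after` reads the expiry date from a live endpoint, and `needs_renewal` decides whether it is time to act.

```python
import socket
import ssl
import time
from typing import Optional


def fetch_not_after(host: str, port: int = 443, timeout: float = 5.0) -> str:
    """Return the notAfter string of the certificate served at host:port."""
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as raw:
        with context.wrap_socket(raw, server_hostname=host) as tls:
            return tls.getpeercert()["notAfter"]


def days_until_expiry(not_after: str, now: Optional[float] = None) -> float:
    """Days remaining before a notAfter timestamp such as 'Jan  5 09:34:43 2018 GMT'."""
    expires_at = ssl.cert_time_to_seconds(not_after)
    current = time.time() if now is None else now
    return (expires_at - current) / 86400


def needs_renewal(not_after: str, threshold_days: float = 30,
                  now: Optional[float] = None) -> bool:
    """True when the certificate expires within `threshold_days`."""
    return days_until_expiry(not_after, now) <= threshold_days
```

A daily cron job or systemd timer could call `needs_renewal(fetch_not_after("example.com"))` and trigger whatever renewal tooling is already in place.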

Scale the Right Way

Vertical scaling (bigger server) works until it doesn't. There's always a ceiling, and the jump from one tier to the next gets expensive. Horizontal scaling (more servers behind a Load Balancer) is more resilient and often more cost-effective.

CubePath Load Balancers distribute traffic across multiple VPS instances with automatic health checks. If an instance fails, traffic routes to the healthy ones. Combined with hourly billing, this means scaling up for a traffic spike costs only the extra hours of compute actually used, and scaling back down is as simple as destroying the extra instances.

For stateless workloads (web servers, API backends, workers), horizontal scaling behind a Load Balancer is almost always the better choice. For stateful workloads (databases, persistent queues), vertical scaling on Dedicated CPU or bare metal with proper replication provides both performance and reliability.
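The idea behind health-checked load balancing can be shown in a few lines. This is a conceptual sketch only, not how CubePath's Load Balancers are implemented: the `Backend` and `RoundRobinBalancer` names are hypothetical, and the probe is any function that returns True for a healthy address.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Backend:
    address: str
    healthy: bool = True


class RoundRobinBalancer:
    """Rotate through backends, skipping any that failed the last health check."""

    def __init__(self, backends: Iterable[Backend], probe: Callable[[str], bool]):
        self.backends: List[Backend] = list(backends)
        self.probe = probe
        self._next = 0

    def run_health_checks(self) -> None:
        # A real balancer runs this on a timer; here it is called explicitly.
        for backend in self.backends:
            backend.healthy = self.probe(backend.address)

    def pick(self) -> Backend:
        # Try each backend at most once per call so a full outage fails fast.
        for _ in range(len(self.backends)):
            backend = self.backends[self._next % len(self.backends)]
            self._next += 1
            if backend.healthy:
                return backend
        raise RuntimeError("no healthy backends available")


if __name__ == "__main__":
    pool = [Backend("10.0.0.1"), Backend("10.0.0.2"), Backend("10.0.0.3")]
    balancer = RoundRobinBalancer(pool, probe=lambda addr: addr != "10.0.0.2")
    balancer.run_health_checks()
    print([balancer.pick().address for _ in range(4)])
```

Because the instances behind the balancer are interchangeable, losing one only reduces capacity. This is why the pattern suits stateless services and not databases.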

Monitor Before You Need To

The worst time to set up monitoring is during an outage. CubePath Cloud Alerts support resource-based alerts (CPU, memory, disk, network) with notifications to Slack, Email, or other channels.

Knowing that a disk is 85% full before it hits 100% is the difference between a planned expansion and an emergency at 3 AM. Knowing that CPU usage has been climbing steadily for the past week gives time to investigate and optimize before users start complaining.
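The same reasoning can be turned into a rough projection. The sketch below is a standalone illustration, independent of Cloud Alerts: it reads current disk usage with `shutil.disk_usage` and fits a straight line through daily usage samples to estimate how many days remain before the disk fills. The function names and the sample values are made up for the example, and a linear trend is an assumption that real growth may not follow.

```python
import shutil
from typing import Optional, Sequence


def disk_used_percent(path: str = "/") -> float:
    """Percentage of the filesystem containing `path` that is in use."""
    usage = shutil.disk_usage(path)
    return usage.used / usage.total * 100


def days_until_full(daily_percent: Sequence[float], limit: float = 100.0) -> Optional[float]:
    """Project days from the last sample until `limit`, using a least-squares line.

    Returns None when there are fewer than two samples or usage is not growing.
    """
    n = len(daily_percent)
    if n < 2:
        return None
    mean_x = (n - 1) / 2
    mean_y = sum(daily_percent) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(daily_percent))
    variance = sum((x - mean_x) ** 2 for x in range(n))
    slope = covariance / variance  # percentage points per day
    if slope <= 0:
        return None
    return (limit - daily_percent[-1]) / slope


if __name__ == "__main__":
    history = [70, 73, 76, 79, 82, 85]  # hypothetical daily readings
    print(f"current usage: {disk_used_percent('/'):.1f}%")
    print(f"projected days until full: {days_until_full(history)}")
```

With readings that grow three points a day and a last sample of 85%, the projection is five days, which is enough time to plan an expansion.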

Right-Size the Infrastructure

Over-provisioning is comfortable but expensive. Under-provisioning is cheap until it causes an outage. The goal is matching resources to actual usage.

CubePath's hourly billing makes right-sizing low risk. Start with a smaller instance, monitor actual resource usage, and resize up if needed. For variable workloads, the combination of Load Balancers and hourly billing creates genuinely elastic infrastructure. More instances during peak hours, fewer during off-hours, and the bill reflects actual usage rather than peak capacity.
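A quick calculation shows why this matters. The figures below are hypothetical, not CubePath prices: a made-up hourly rate, two instances off-peak, six during an eight-hour daily peak. The sketch compares paying for peak capacity all month with paying only for the instance-hours used.

```python
def fixed_peak_hours(peak_instances: int, days: int = 30) -> int:
    """Instance-hours when peak capacity runs around the clock."""
    return peak_instances * 24 * days


def elastic_hours(base_instances: int, peak_instances: int,
                  peak_hours_per_day: int, days: int = 30) -> int:
    """Instance-hours when extra instances run only during peak hours."""
    off_peak_hours = 24 - peak_hours_per_day
    per_day = peak_instances * peak_hours_per_day + base_instances * off_peak_hours
    return per_day * days


def monthly_cost(hourly_rate: float, instance_hours: int) -> float:
    return hourly_rate * instance_hours


if __name__ == "__main__":
    rate = 0.02  # hypothetical price per instance-hour
    fixed = fixed_peak_hours(peak_instances=6)
    elastic = elastic_hours(base_instances=2, peak_instances=6, peak_hours_per_day=8)
    print(f"always at peak: {fixed} instance-hours, {monthly_cost(rate, fixed):.2f}")
    print(f"elastic:        {elastic} instance-hours, {monthly_cost(rate, elastic):.2f}")
```

Under these assumptions the elastic setup uses 2,400 instance-hours against 4,320 for fixed peak capacity, a reduction of roughly 44%. The actual saving depends entirely on how spiky the traffic is.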

The Infrastructure That Supports All of This

Optimization isn't just about what runs on top of the infrastructure. The infrastructure itself matters:

- NVMe storage everywhere removes the most common I/O bottleneck
- Private networking with MTU 9000 makes inter-service communication fast and free
- Multiple instance types allow matching hardware to workload characteristics
- Hourly billing removes the penalty for experimentation and right-sizing
- Load Balancers with health checks enable horizontal scaling without custom HAProxy setups
- Terraform, CLI, and API make infrastructure reproducible and automatable
- Cloud Alerts provide proactive monitoring without building a monitoring stack
- Included DDoS protection means baseline attack mitigation doesn't require additional configuration

The best optimization is choosing infrastructure that doesn't create bottlenecks in the first place.